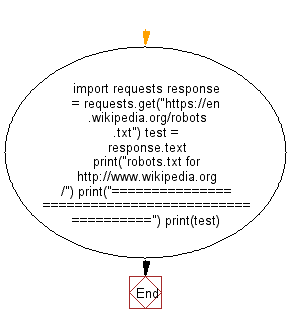

Elias Dabbas on Twitter: "XML sitemap trick: `import advertools as adv`, then `all_indexes = adv.sitemap_to_df("https://t.co/RFZMNIRSaK", recursive=False)` to get all available sitemap files, first level only, automatically extracted ...
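
The "first level only" idea from the tweet can be illustrated without advertools, using only the Python standard library. This is a rough sketch under stated assumptions, not a description of how `adv.sitemap_to_df` works internally: the helper name `first_level_sitemaps` and the sample index with its example.com URLs are hypothetical. It reads a sitemap index and lists the child sitemap files without following them.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol (version 0.9).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap index, used only for illustration.
SAMPLE_INDEX = """<?xml version="1.0" encoding="UTF-8"?>
<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap>
    <loc>https://example.com/sitemap-posts.xml</loc>
    <lastmod>2022-02-01</lastmod>
  </sitemap>
  <sitemap>
    <loc>https://example.com/sitemap-pages.xml</loc>
  </sitemap>
</sitemapindex>
"""


def first_level_sitemaps(index_xml):
    """Return the child sitemap URLs listed in a sitemap index.

    Hypothetical helper: it only reads the index itself and never
    fetches or parses the child sitemaps (the non-recursive case).
    """
    # Encode to bytes so the XML declaration's encoding is honored.
    root = ET.fromstring(index_xml.encode("utf-8"))
    return [
        loc.text.strip()
        for loc in root.iterfind("sm:sitemap/sm:loc", SITEMAP_NS)
        if loc.text
    ]


if __name__ == "__main__":
    for url in first_level_sitemaps(SAMPLE_INDEX):
        print(url)
```

In the tweet, passing `recursive=False` to `adv.sitemap_to_df` serves the same purpose: it stops at the list of sitemap files instead of descending into each one.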

GitHub prevents crawling of repository's Wiki pages - no Google search · Issue #1683 · isaacs/github · GitHub

Ant on Twitter: "@whereisaaron @JezCorden I believe the bigger issue is they're aiming to have users go to these ChatGPT/OpenAI-backed services to get their answers first and just avoid search engines altogether.

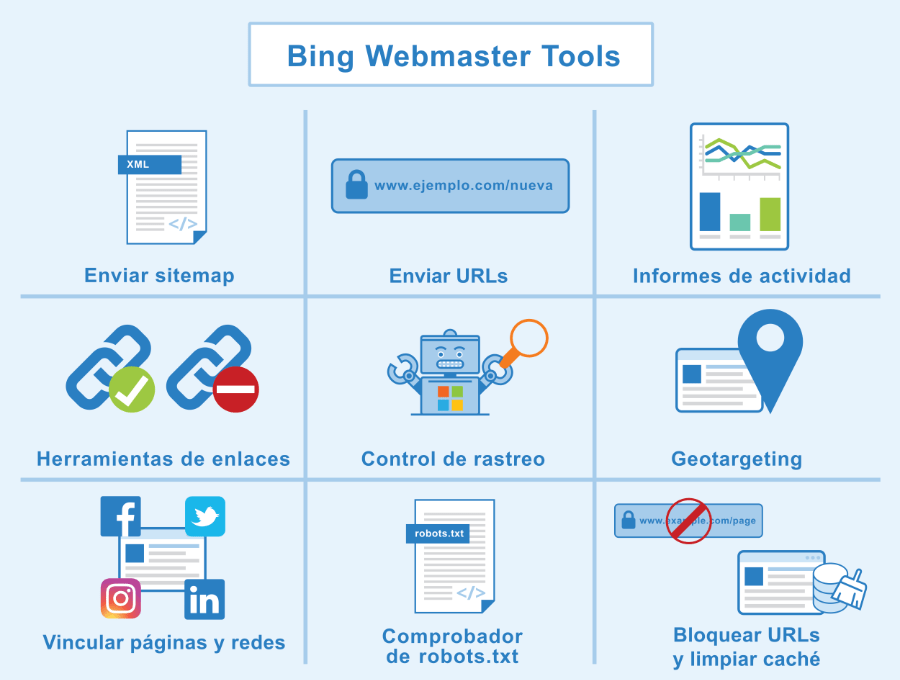

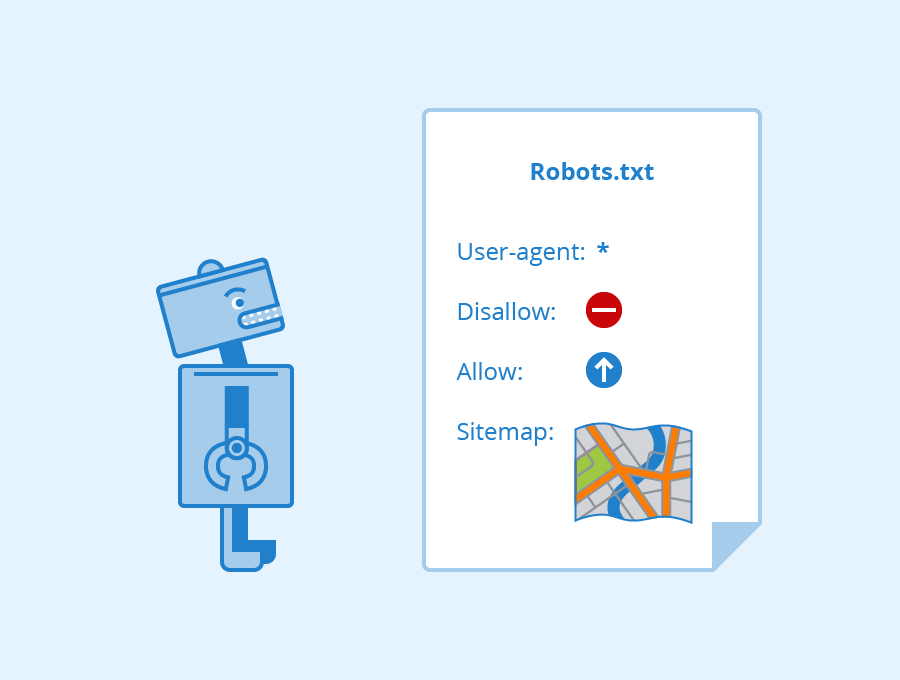

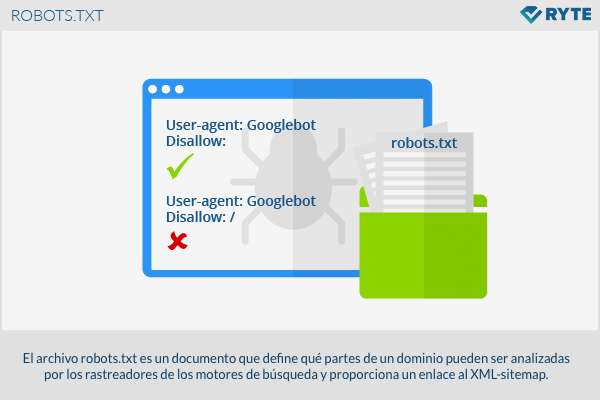

![What Is Robots.txt? Updated Guide [2022]](https://www.factoriacreativabarcelona.es/wp-content/uploads/2022/02/ejemplo-archivo-robots-txt-2022.png)